I’ve often commented that if human beings are the (or a) result, it wasn’t a very intelligent designer.

The most telling demonstration I’ve seen recently is a series of experiments conducted between 1959 and 1962, reported in wonderfully readable form in Morton Davis’s Game Theory: A Nontechnical Introduction. I recommend this book not only for its fascinating and wide-ranging coverage of a field that spans many disciplines, but for its lucid and engaging prose–and for the fact that it’s available in a ridiculously inexpensive Dover edition.

In these experiments, pairs of people were pitted against each other in a series of games, starting with classic prisoner’s-dilemma setups. (Players can cooperate with each other or “defect.” The rewards vary based on a player’s choice and the other player’s choice. Generally you win more if you defect and the other player cooperates. But if you both defect, you both lose big time.)

One important aspect of these experiments: each pair played the same game against each other fifty times in a row. So they could learn about the other and the game, and adapt their strategies accordingly.

Not surprisingly, pairs had trouble getting to cooperate/cooperate solutions, because the temptation was always there to defect and snag the big win at the other’s expense.

What was surprising–and what demonstrates humans’ profound irrationality–were the results in games that had no dilemma: games where an obvious, win-win cooperative solution was lying there on the table for both to see, and where either player did worse by defecting, no matter what the other player did.

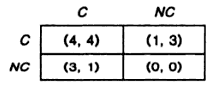

Here’s an example:

This matrix shows the results if each player cooperates or doesn’t. If they both cooperate, they get 4 each. If one defects, he gets 3 instead of 4, and the other gets 1. If they both defect, they both get zero. Both players understood this matrix. And they played “against” each other fifty times.
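To make the "no dilemma" point concrete, here is a minimal sketch that encodes the payoffs as described above and checks that cooperating beats defecting whatever the other player does. The names `PAYOFFS`, `my_payoff`, and `strictly_dominates` are made up for illustration; only the numbers come from the matrix.

```python
# Payoffs as described in the post: (my_payoff, other_payoff),
# keyed by (my_move, other_move). "C" = cooperate, "D" = defect.
PAYOFFS = {
    ("C", "C"): (4, 4),
    ("C", "D"): (1, 3),
    ("D", "C"): (3, 1),
    ("D", "D"): (0, 0),
}


def my_payoff(mine, theirs):
    """Payoff to the player choosing `mine` when the other chooses `theirs`."""
    return PAYOFFS[(mine, theirs)][0]


def strictly_dominates(a, b):
    """True if move `a` pays more than move `b` whatever the other player does."""
    return all(my_payoff(a, other) > my_payoff(b, other) for other in "CD")


print(strictly_dominates("C", "D"))  # True: 4 > 3 and 1 > 0
print(strictly_dominates("D", "C"))  # False
```

In a true prisoner’s dilemma the first check would come out the other way around, which is what makes the temptation to defect understandable there and baffling here.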

The results? In Davis’s words,

In game 7 the players cooperated 53 percent of the time, but in the last 15 trials they failed to cooperate more than half the time. [Davis’s italics.]

As Davis says, in this type of scenario “it was absurd to play uncooperatively.” But “even in the last game the outcome was very close to what you would expect if the players picked their strategies by tossing a coin. Moreover, as the game progressed the tendency to cooperate became weaker rather than stronger.”
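For a rough sense of what coin-tossing costs, here is a small sketch of the average per-round payoff under the matrix above. It assumes both players choose independently and cooperate with the same probability; that model and the function name `expected_payoff` are my own illustration, not something taken from Davis.

```python
from itertools import product

# Payoff to "me", keyed by (my_move, other_move), from the matrix in the post.
MY_PAYOFF = {
    ("C", "C"): 4,
    ("C", "D"): 1,
    ("D", "C"): 3,
    ("D", "D"): 0,
}


def expected_payoff(p_cooperate):
    """Average payoff per round to one player, assuming both players
    independently cooperate with probability `p_cooperate`."""
    prob = {"C": p_cooperate, "D": 1 - p_cooperate}
    return sum(
        prob[mine] * prob[theirs] * MY_PAYOFF[(mine, theirs)]
        for mine, theirs in product("CD", repeat=2)
    )


print(expected_payoff(0.5))  # 2.0, the coin-toss case
print(expected_payoff(1.0))  # 4.0, both always cooperate
```

Under these assumptions, coin-flipping players each leave half of the available payoff on the table every round.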

What are you gonna do with people?

You can read this whole section of Davis’s book in Google Books here.

Comments

6 responses to “Humans are Pathologically Nuts: Proof Positive”

Is it greed at work? Or avoidance of humiliation for trying to cooperate and getting screwed?

(French: laisser tomber. Love that.)

I’d say foolish pride.

[…] a recent post I pointed out that Humans are Pathologically Nuts. In particular they’re forever playing […]

If the payoff’s in something of actual value, then yes, it’s utterly irrational. But if the payoff is just score, then some people might have been confused about the object of the game, i.e., “get the highest score possible” vs. “get a higher score than the other person”.

[…] Morton Davis, Game Theory, a Nontechnical Introduction. Stands out on this list cause it’s not one of those “big” books. Available in a shitty little $10 Dover edition. But it’s an incredibly engaging walk through the subject, full of surprising anecdotes and insights. And he does all the algebra for you! The stuff in here makes all the other books above, better, cause they’re all using some aspects of this thinking. Here’s an Aha! example I wrote up: Humans are Pathologically Nuts: Proof Positive. […]
